The UK government has decided against rushing new laws on AI training and copyright, opting instead for continued evidence gathering and international monitoring amid deep industry divisions and ongoing legal uncertainty.

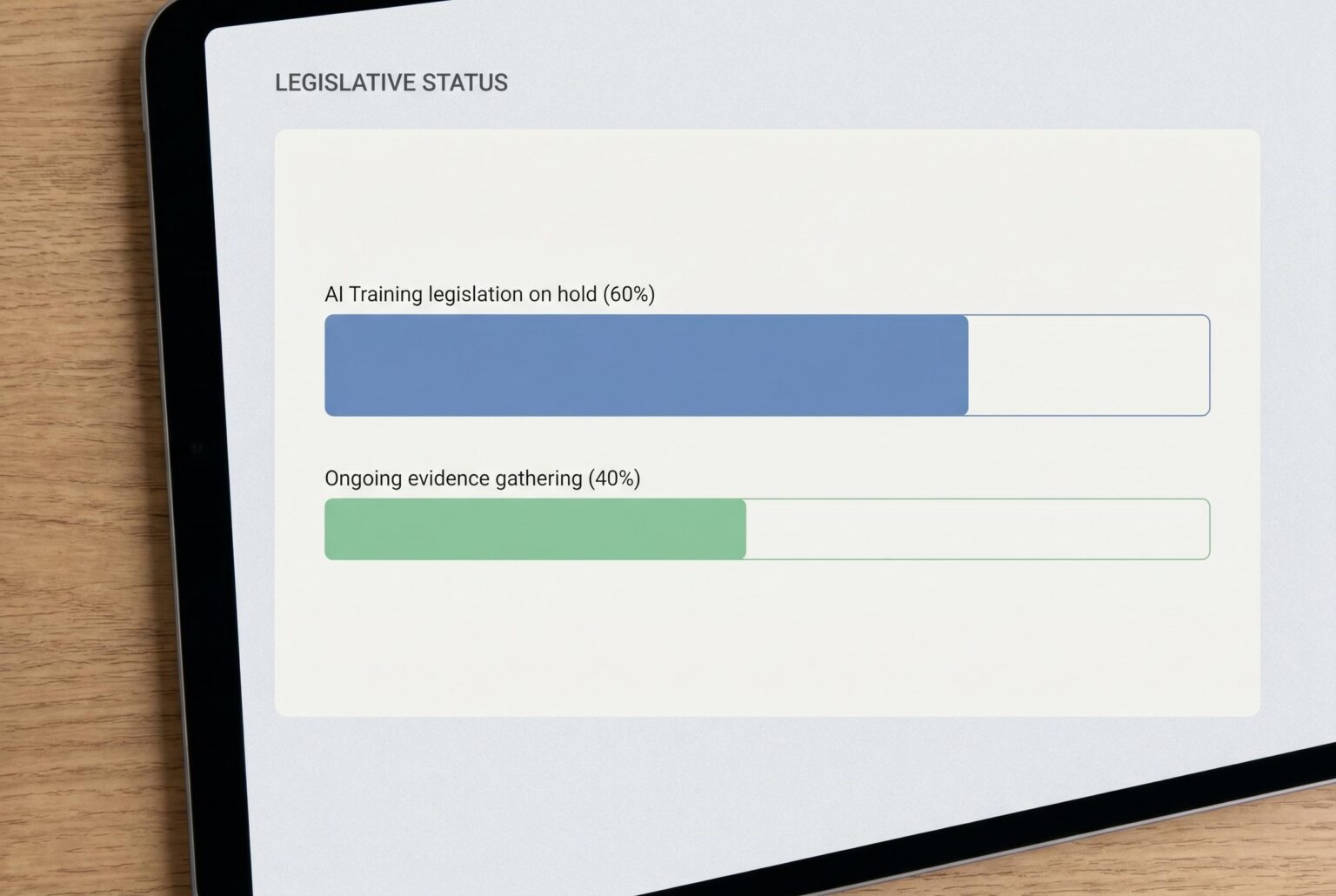

The UK government has stepped back from a previously favoured opt-out model for the use of copyright works in training artificial intelligence, leaving the current legal framework unchanged while it gathers more evidence and watches how the courts and other jurisdictions develop the law. According to the Department for Science, Innovation and Technology’s report published on 18 March 2026, the consultation exposed deep divisions between creative rights holders, who want consent and payment at the centre of any regime, and AI developers, who argue that compulsory licensing could slow innovation and weaken Britain’s position in the sector.

The report does not resolve the core question of whether training an AI system on protected works amounts to infringement, and that uncertainty continues to be tested in litigation. Rather than rushing to legislate, ministers said they would continue evidence gathering, technical work and monitoring of international developments, a shift that legal commentators have described as a reset in policy direction.

Transparency emerged as one of the few areas where there was broad convergence. The consultation pointed to the need for greater, but proportionate, clarity over training data, the provenance of outputs and accountability mechanisms, with the government noting that these questions overlap with data protection, consumer protection and wider AI governance rules.

On computer-generated works, the government said most respondents did not support copyright protection for works created solely by AI, while leaving open the possibility that material produced with meaningful human involvement should continue to qualify. The report also suggests that labelling of AI-generated content is gaining acceptance, although ministers stopped short of proposing new mandatory rules and said they would instead monitor best practice and international standards.

The issue of digital replicas and deepfakes was treated more cautiously. The government acknowledged growing concern about the unauthorised use and commercialisation of a person’s likeness in a legal system that lacks a single, dedicated image-rights regime, but said it had no immediate plan to introduce personality rights. Instead, it will gather views on whether the current patchwork of laws remains fit for purpose. For rights holders, the practical message is to keep tightening access controls and contractual protections; for AI developers, the report is a reminder to strengthen governance, record-keeping and risk controls while the law continues to evolve.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article was published on 23 April 2026, about five weeks after the UK government’s report on copyright and artificial intelligence was published on 18 March 2026. ([gov.uk](https://www.gov.uk/government/publications/report-and-impact-assessment-on-copyright-and-artificial-intelligence/report-on-copyright-and-artificial-intelligence?utm_source=openai)) The content appears to be original and not recycled from other sources. However, the article references the UK government’s report, indicating that it is based on existing information rather than presenting new developments.

Quotes check

Score: 7

Notes:

The article does not include direct quotes. It paraphrases information from the UK government’s report and other sources. While the paraphrasing is consistent with the original sources, the lack of direct quotes makes it difficult to verify the exact wording and context of the information presented.

Source reliability

Score: 6

Notes:

The article is published on IP Law Watch, a platform that provides legal insights and commentary. While it appears to be a reputable source within its niche, it is not a major news organisation. The article references the UK government’s report and other reputable sources, but the analysis is from a single perspective, which may introduce bias.

Plausibility check

Score: 8

Notes:

The claims made in the article align with the UK government’s report on copyright and artificial intelligence. ([gov.uk](https://www.gov.uk/government/publications/report-and-impact-assessment-on-copyright-and-artificial-intelligence/report-on-copyright-and-artificial-intelligence?utm_source=openai)) The discussion on the government’s position regarding AI training and copyright is consistent with the report’s findings. However, the article’s interpretation of the government’s stance may reflect the author’s perspective, which could influence the presentation of information.

Overall assessment

Verdict (FAIL, OPEN, PASS): PASS

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article summarises and analyses the UK government’s report on copyright and artificial intelligence. It is based on publicly available sources that are not paywalled, but the lack of direct quotes and the reliance on a single source for analysis raise concerns about the independence and verifiability of the information presented. Editors should exercise caution and consider seeking additional sources to confirm the details provided.