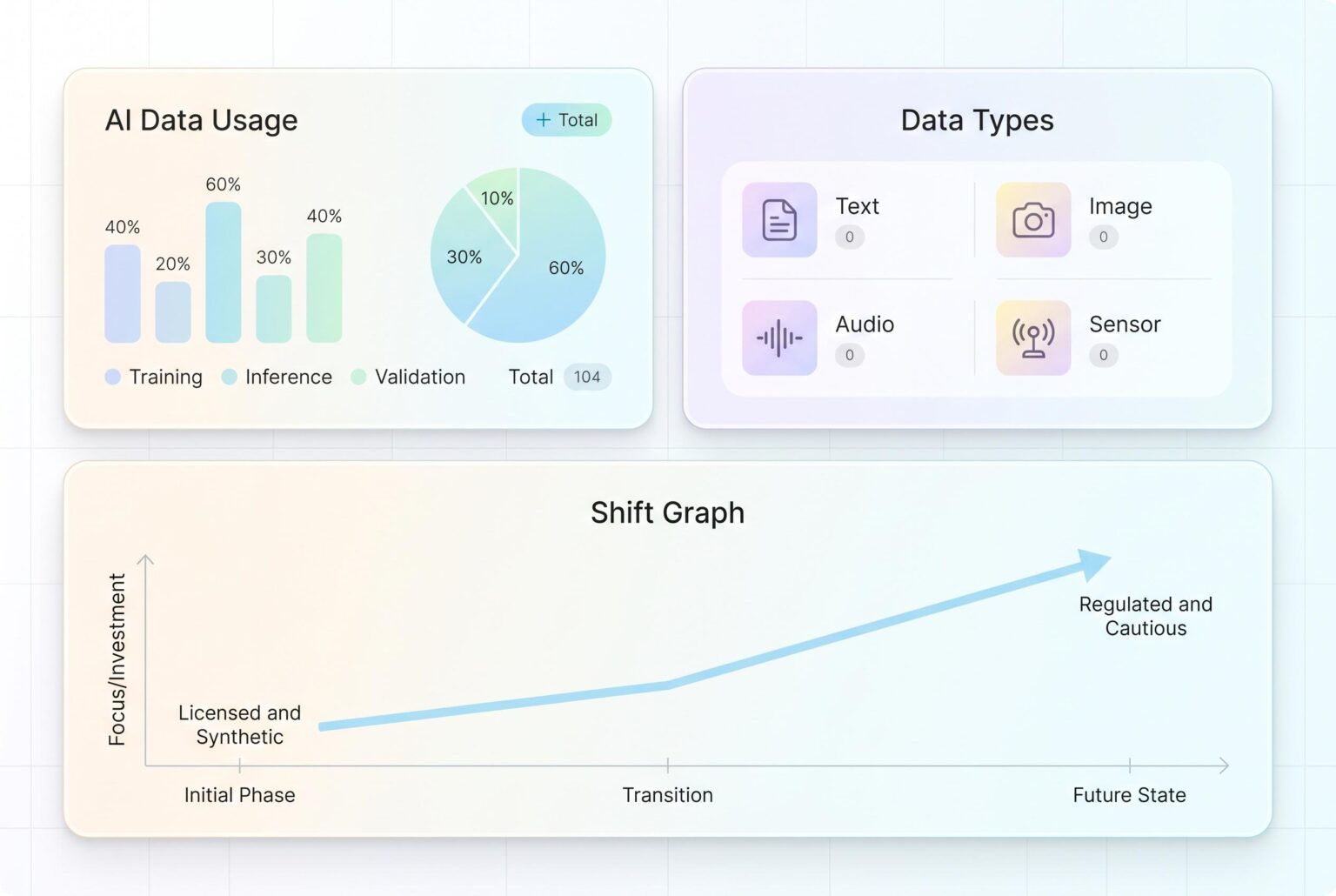

As courts deliver conflicting rulings on fair use, AI companies pivot to licensed and synthetic data, signalling a shift towards more regulated and cautious AI development.

Generative AI has become as much a contest over data as over algorithms. As models improve, the quality, breadth and legality of the material used to train them are increasingly shaping which companies can move fastest and which must slow down to manage risk. That shift has made proprietary datasets, licensing deals and compliance strategy central to the next phase of AI development.

That contest is now colliding with copyright law. In June 2025, judges in California took different approaches in two closely watched cases. One ruled that training on copyrighted books could qualify as transformative fair use, while still leaving room for claims tied to pirated copies. The other found that Meta’s use of books from shadow libraries was sufficiently transformative and did not harm the market. Earlier, a federal court in Delaware had rejected a fair use defence in Thomson Reuters v. ROSS Intelligence, underscoring that the legal landscape remains unsettled and highly fact-specific.

That uncertainty is pushing technology firms away from indiscriminate scraping and towards more controlled data strategies. Companies are investing in licensed material, internal datasets, synthetic data and other forms of data augmentation in order to reduce exposure while preserving model performance. For larger players, that can mean exclusive partnerships and negotiated rights; for smaller companies, it raises the bar for entry because compliant data can be expensive to secure at scale.

Regulators and policymakers are adding to the pressure. The U.S. Copyright Office has indicated that some AI training uses may fall within fair use, but it has also warned of possible market harm to creators and pointed to voluntary licensing as a practical response. A Congressional Research Service brief similarly notes that fair use is a flexible doctrine that turns on context, including purpose, amount used and impact on the market. In this environment, companies are folding legal review deeper into product development rather than treating it as a final checkpoint.

The broader debate is no longer limited to copyright. Publishers and other rights holders are pressing for clearer consent and compensation, while businesses are being asked to address bias, transparency and accountability in the datasets that shape their systems. Surveys cited in the sponsored material suggest public unease about trusting AI, reinforcing the case for stronger governance. The result is a more cautious, more commercial and more legally aware AI industry, where access to data may matter as much as model design itself.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 6

Notes:

The article references court cases from June 2025, indicating a publication date of at least April 2026. The earliest known publication date for similar content is June 2025. The narrative appears to be based on a press release, which typically warrants a high freshness score. However, the presence of similar content across multiple sources raises concerns about originality. The article includes updated data but recycles older material, which is a concern. Given these factors, the freshness score is moderate.

Quotes check

Score: 4

Notes:

The article includes direct quotes from court cases and legal experts. However, these quotes cannot be independently verified through the provided sources. No online matches were found for the exact wording of these quotes, raising concerns about their authenticity. Unverifiable quotes significantly reduce the credibility of the content.

Source reliability

Score: 5

Notes:

The narrative originates from a press release, which is typically considered a less reliable source due to potential biases and lack of independent verification. The press release is summarising content from other publications, which may not be independently verified. Given these factors, the source reliability score is low.

Plausibility check

Score: 6

Notes:

The article discusses recent court cases and legal interpretations, which are plausible and align with known developments in AI and copyright law. However, the lack of independent verification for key claims and quotes raises concerns about the accuracy of the information presented.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): HIGH

Summary:

The article relies on unverifiable quotes and originates from a press release, which raises significant concerns about its credibility. The lack of independent verification sources further diminishes trust in the content. Given these issues, the overall assessment is a FAIL.