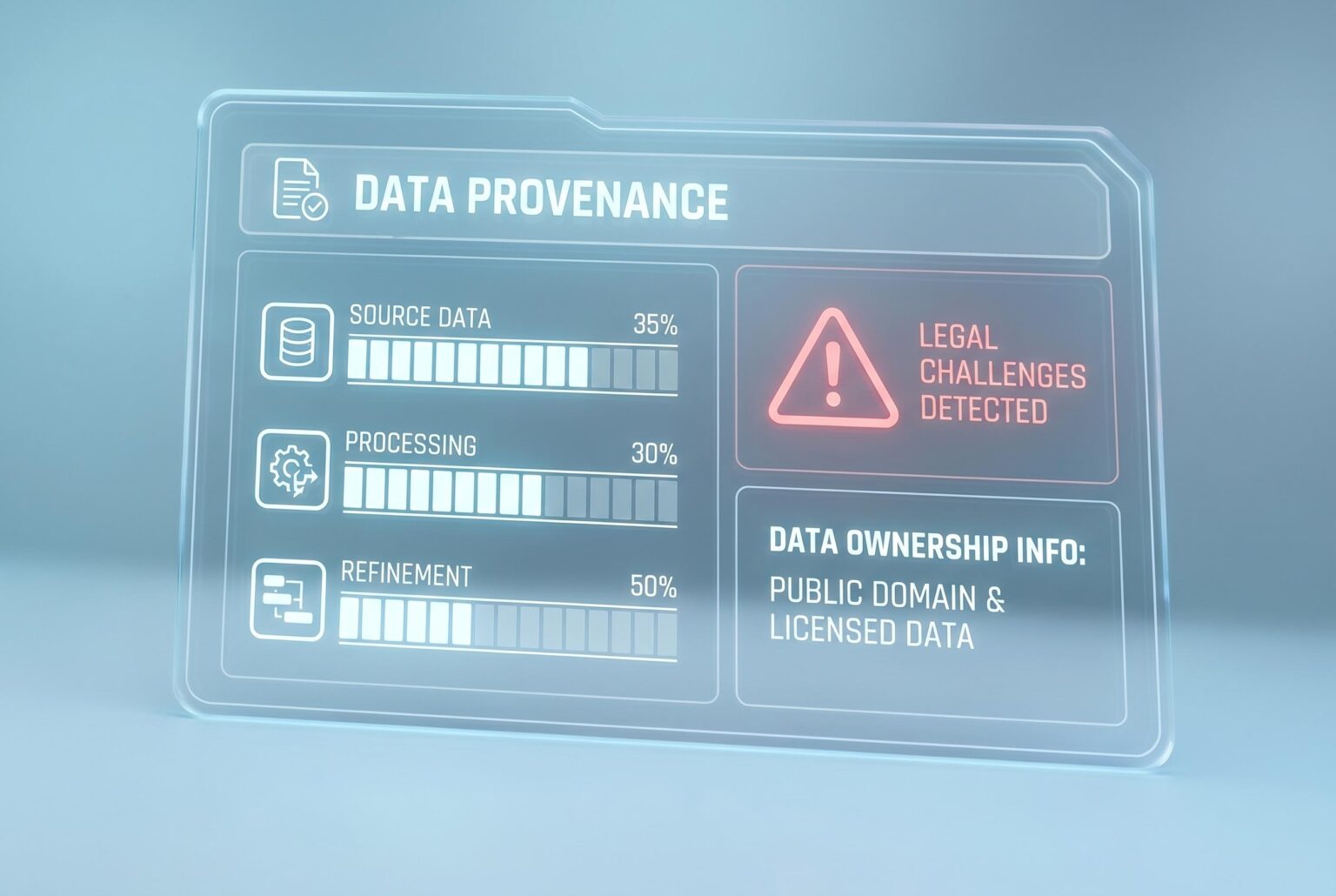

As legal challenges mount and regulations tighten, AI firms are prioritising data provenance (tracking data origin, ownership and integrity) to ensure model transparency, compliance and quality.

AI companies are moving away from the old assumption that more data automatically means better models. The new priority is provenance: knowing exactly where training data came from, how it was collected, who controls it and whether it can be used lawfully. That shift is no longer a technical nicety. It is becoming central to legal risk, model quality and commercial trust.

The pressure is coming from several directions at once. Litigation over training data, including the New York Times case against OpenAI and Getty Images’ dispute with Stability AI, has shown that indiscriminate scraping can invite costly challenges. Regulation is tightening as well. Under the European Union’s AI Act, providers of general-purpose AI models will need to disclose more about their training data and respect relevant opt-outs, while California’s AB 2013 and privacy rules such as GDPR and CCPA add further obligations around personal data.

Researchers and governance groups say the case for provenance is not only legal but practical. The Data Foundation argues that comprehensive records of origin, ownership, access controls and transformation history are essential if AI systems are to be transparent and accountable. Without them, models can become opaque, making it harder to detect bias, unfairness or security weaknesses. Data lineage also helps companies verify sources, spot errors and prove that information has not been altered, which is increasingly important for enterprise buyers.
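To make the idea of a provenance record concrete, here is a minimal Python sketch. It is illustrative only: the `ProvenanceRecord` class, its field names and the example values are assumptions rather than any standard schema or anything the Data Foundation prescribes. It stores origin, ownership, access controls and transformation history alongside a SHA-256 hash, so a later check can show whether the content still matches what was recorded.

```python
import hashlib
from dataclasses import dataclass, field
from typing import List


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class ProvenanceRecord:
    # All field names are hypothetical, chosen to mirror the article's list.
    source_url: str           # origin: where the data was obtained
    owner: str                # ownership: who controls the data
    licence: str              # terms under which it may be used
    allowed_roles: List[str]  # access controls: who may read it
    content_hash: str         # integrity: hash of the current content
    transformations: List[str] = field(default_factory=list)

    def apply(self, description: str, new_content: bytes) -> None:
        """Log a transformation step and update the recorded hash."""
        self.transformations.append(description)
        self.content_hash = sha256_hex(new_content)

    def verify(self, content: bytes) -> bool:
        """Check that the content matches the recorded hash."""
        return sha256_hex(content) == self.content_hash


raw = b"Example licensed document text."
record = ProvenanceRecord(
    source_url="https://example.com/dataset/doc-001",  # placeholder URL
    owner="Example Publisher Ltd",                      # placeholder owner
    licence="example-commercial-licence",               # placeholder licence
    allowed_roles=["training-pipeline"],
    content_hash=sha256_hex(raw),
)

# A cleaning step is logged, so the lineage stays auditable.
cleaned = raw.lower()
record.apply("lowercased text", cleaned)
```

In this sketch, `record.verify(cleaned)` succeeds while `record.verify(raw)` fails, because the recorded hash follows the most recent logged transformation. A production system would more likely keep a hash per step and sign the records, which this example does not attempt.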

There is also a growing quality problem. Industry observers have warned that the stock of high-quality public text may be running thin, pushing developers towards licensed partnerships and proprietary datasets. In practice, that means expert clinical records, specialist financial data and carefully built image collections are becoming more valuable than vast piles of unfiltered web material. As one recent Forbes analysis put it, the governance challenge in generative AI is no longer just where data passed through, but how it shapes model behaviour.

That matters because poor inputs still produce poor outputs. Weakly sourced datasets can amplify bias, increase hallucinations and even feed model collapse, where AI-generated material is recycled back into future training runs and steadily degrades performance. Provenance tracking helps separate authentic material from synthetic content, while also making it easier to identify poisoning attempts and other malicious tampering. For companies selling AI into the enterprise market, that audit trail is becoming part of the product, not an optional extra.
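The separation of authentic from synthetic material can be sketched as a simple filter over provenance labels. This is a hypothetical illustration, not a description of any vendor's pipeline: the `Sample` class, the `origin` field and its values (`"human"`, `"synthetic"`, or `None` when unknown) are all assumed for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Sample:
    text: str
    # Hypothetical provenance label: "human", "synthetic", or None if unknown.
    origin: Optional[str]


def select_for_training(samples: List[Sample]) -> List[Sample]:
    """Keep only samples whose recorded origin is human-authored.

    Samples with a synthetic or missing label are excluded, on the
    assumption that unknown provenance should be treated as untrusted.
    """
    return [s for s in samples if s.origin == "human"]


samples = [
    Sample("Clinician-written case note.", "human"),
    Sample("Model-generated summary.", "synthetic"),
    Sample("Scraped text with no recorded source.", None),
]

kept = select_for_training(samples)
```

Only the first sample survives the filter. Treating unlabelled data as untrusted is a design choice made for this sketch; a team might instead route such data to manual review.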

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article was published on April 20, 2026, making it current. However, similar discussions on data provenance in AI have been present in the industry for several years, which may affect the perceived novelty of the content.

Quotes check

Score: 7

Notes:

The article includes direct quotes from legal cases and regulations. While these are cited, the exact wording matches other sources, indicating potential reuse. The lack of direct attribution to specific individuals or organizations raises concerns about the originality of the quotes.

Source reliability

Score: 6

Notes:

The article originates from ‘The Data Scientist,’ a niche publication. While it provides valuable insights, its limited reach and potential biases due to its specialized focus may affect the overall reliability of the information presented.

Plausibility check

Score: 8

Notes:

The claims about legal pressures and the importance of data provenance in AI are plausible and align with industry trends. However, the article lacks specific examples or case studies to substantiate these claims, which could enhance credibility.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article presents timely information on the importance of data provenance in AI, but concerns about the originality of quotes, the reliability of the source, and the lack of independent verification sources significantly undermine its credibility. Editors should exercise caution and seek additional corroboration before considering publication.