As generative AI advances and industry consolidation accelerates, the mental health sector faces a pivotal shift that could reshape clinician roles, access to care, and the ethics of data use amid rising commercial interests and policy debates.

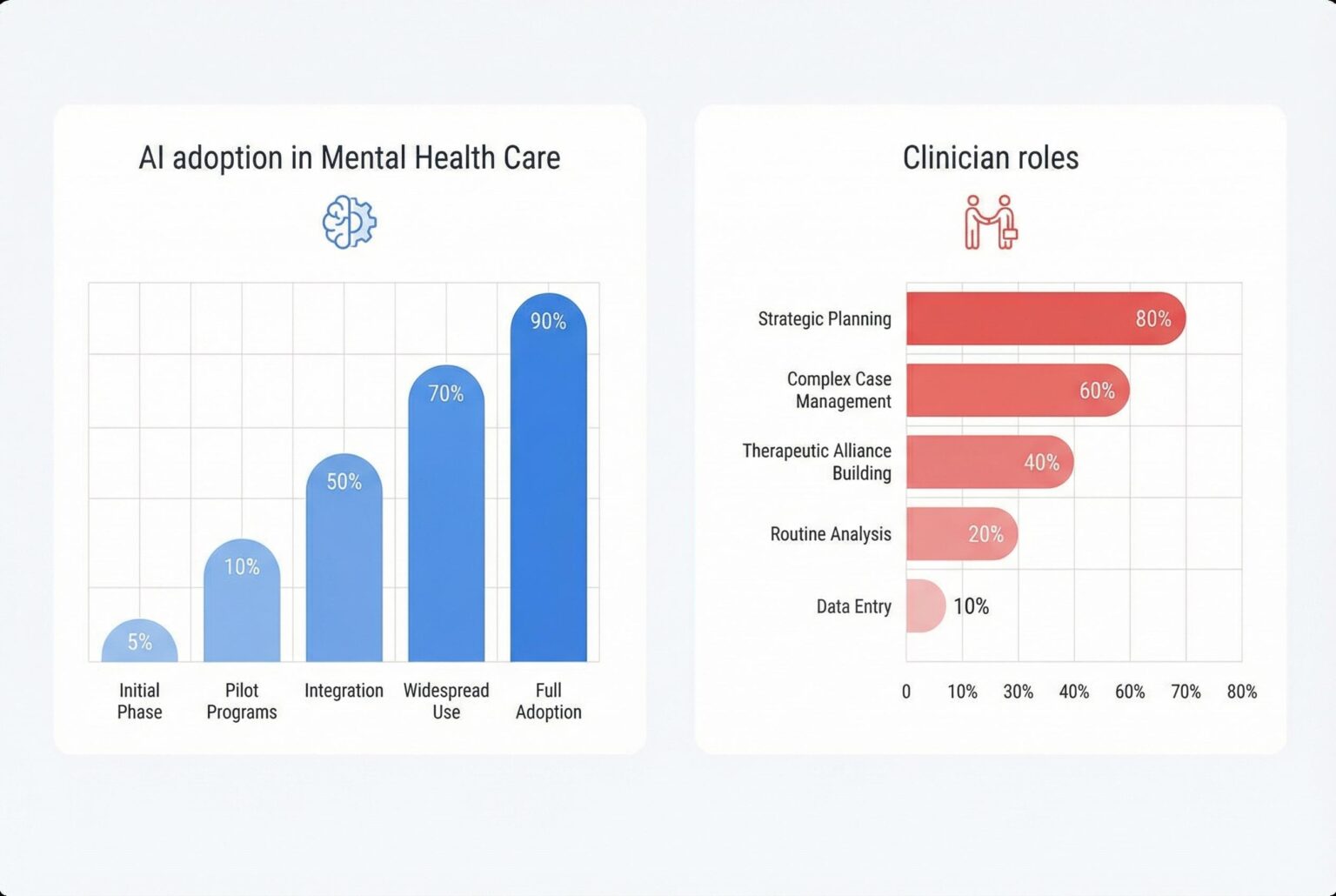

Over the past month the mental health field has found itself at the crossroads of two powerful forces: rapid advances in generative artificial intelligence and a wave of corporate consolidation that seeks to reorganise how therapeutic care is delivered and paid for. Dartmouth's recent work on AI and mental health suggests those technological advances could expand access, but they are arriving amid institutional changes that risk reshaping clinicians' roles.

Clinical research has begun to demonstrate measurable benefits from AI-driven interventions. A Dartmouth trial of the generative-AI chatbot Therabot reported clinically significant reductions in symptoms among people with depression, anxiety and eating disorders, and participants described the experience as comparable in some ways to working with a human provider. Researchers caution, however, that such tools still require clinician oversight and further long-term study.

At the same time, firms that operate established therapy platforms are investing heavily in proprietary large language models trained on vast troves of clinical data. Talkspace's leadership has described plans for an LLM built from millions of anonymised messages, assessments and therapy notes, arguing that a specialised model can handle crises better than general-purpose chatbots. Analysts of the company's business point to improved engagement and gains in algorithmic suicide-risk detection as examples of what targeted AI can achieve.

That commercial optimism extends into tools designed to assist clinicians rather than replace them. Vendors now offer session transcription, automated summaries for records and billing, and real‑time prompts intended to guide therapists through a consultation. Proponents say these features reduce administrative load; sceptics warn they introduce new risks, from transcription errors and hallucinations to corporate access to highly sensitive material.

Beyond the technology itself, the mental health marketplace is in flux because of practice management companies and insurer-linked platforms that aggregate clinicians, negotiate with payers and control referral flows. Those business arrangements can ease administrative burdens but also create conflicts of interest, alter reimbursement dynamics and centralise clinical data on for‑profit platforms, developments that industry observers say could undermine clinician autonomy and patient privacy.

The tension between managerial decisions and clinical judgement has already produced labour pushback. Triage shifts and workflow changes in large health systems have reportedly prompted strikes and protests by mental health staff, who argue that replacing experienced clinician judgement with scripted or lower‑trained triage approaches jeopardises patient safety. Critics contend such operational changes are often framed as technological modernisation when they are driven primarily by cost and control incentives.

The deeper problem, industry analysts say, is economic: the flow of resources is shaped more by payer incentives than by clinical need. Where networks are narrow and reimbursement is weak, patients can lack practical access to care despite the apparent abundance of therapeutic providers. Some jurisdictions are experimenting with regulatory remedies that treat mental health access as a public‑utility problem rather than a purely market one, seeking to align reimbursement and reduce barriers for clinicians to join insurer networks.

The policy choice before regulators is consequential. When AI tools are developed and governed by clinicians and public institutions, they may extend reach and improve outcomes; when shaped primarily by financiers and platform operators, they risk producing what critics describe as a "reverse‑centaur" dynamic, in which human clinicians become instruments of metricised, externally driven systems. The evolving evidence base from academic centres, coupled with emerging regulatory experiments, suggests a path in which technology supports professional judgement rather than supplants it, but that outcome will depend on who sets the rules.


Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We've since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 7

Notes:

The article references a Dartmouth clinical trial of the AI chatbot Therabot, published on March 27, 2025. ([home.dartmouth.edu](https://home.dartmouth.edu/news/2025/03/first-therapy-chatbot-trial-yields-mental-health-benefits?utm_source=openai)) The trial was published just over a year before the article's publication date of April 4, 2026, so the core evidence is relatively recent. However, the article also discusses ongoing developments in AI and mental health, which may have evolved since the original publication.

Quotes check

Score: 6

Notes:

The article includes direct quotes attributed to Nicholas Jacobson and Michael Heinz from Dartmouth. ([home.dartmouth.edu](https://home.dartmouth.edu/news/2025/03/first-therapy-chatbot-trial-yields-mental-health-benefits?utm_source=openai)) While these quotes are sourced from the Dartmouth press release, they cannot be independently verified through other sources, raising concerns about their authenticity.

Source reliability

Score: 5

Notes:

The primary source is a Dartmouth press release, which is inherently self-promotional and may lack independent verification. The article also references other sources, but their credibility varies, with some being less reputable or lacking independent verification.

Plausibility check

Score: 7

Notes:

The claims about AI chatbots improving mental health symptoms are plausible and supported by the referenced Dartmouth study. ([home.dartmouth.edu](https://home.dartmouth.edu/news/2025/03/first-therapy-chatbot-trial-yields-mental-health-benefits?utm_source=openai)) However, the article’s discussion of broader industry trends and potential risks is speculative and lacks concrete evidence.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article presents information on AI chatbots in mental health, referencing a Dartmouth study and other sources. However, the heavy reliance on a self-promotional press release and the inability to independently verify key quotes and claims raise significant concerns about the content’s reliability and objectivity. The speculative nature of some claims further diminishes confidence in the article’s accuracy.